
Suggested Citation:"2 Introduction to Instrumentation." National Academy of Sciences, National Academy of Engineering, and Institute of Medicine. 2006. Advanced Research Instrumentation and Facilities. Washington, DC: The National Academies Press. doi: 10.17226/11520.

This chapter introduces the subject of instrumentation in general; defines the particular instrumentation that is the subject of this report, advanced research instrumentation and facilities (ARIF); and gives examples of ARIF used in various fields.

Instruments have revolutionized how we look at the world and refined and extended the range of our senses. From the beginnings of the development of the modern scientific method, its emphasis on testable hypotheses required the ability to make quantitative and ever more accurate measurements—for example, of temperature with the thermometer (1593), of cellular structure with the microscope (1595), of the universe with the telescope (1609), and of time itself (to discern longitude at sea) with the marine chronometer (1759). Instruments have been an integral part of our nation’s growth since explorers first set out to map the continent. The establishment of the US Geological Survey had its roots in the exploration of the western United States, and its activities depended critically on advanced surveying instruments.

A large fraction of the differences between 19th century, 20th century, and 21st century science stems directly from the instruments available to explore the world. The scope of research that instrumentation enables has expanded considerably, now encompassing not only the natural (physical and biologic) world but also many facets of human society and behavior. Instrumentation has often been cited as the pacing factor of research; the productivity of researchers is only as great as the tools they have available to observe, measure, and make sense of nature. As one of the committee’s survey respondents commented,

without continued infrastructure support…. We will see many young investigators changing the nature of the projects and science they do to areas that have less impact but assure better chances of success. The lack of instruments or the ability to upgrade aging local facilities simply dictates the science done in the future. 1

Cutting-edge instruments not only enable new discoveries but help to make the production of knowledge more efficient. Many newly developed instruments are important because they enable us to explore phenomena with more precision and speed. The development of instruments maintains a symbiotic relationship with science as a whole; advanced tools enable scientists to answer increasingly complex questions, and new findings in turn enable the development of more powerful, and sometimes novel, instruments.

Instrumentation facilitates interdisciplinary research. Many of the spectacular scientific, engineering, and medical achievements of the last century followed the same simple paradigm of migration from basic to applied science. For example, as the study of basic atomic and molecular physics matured, the instruments developed for those activities were adopted by chemists and applied physicists. That in turn enabled applications in biological, clinical, and environmental science, driven both by universities and by innovative companies. A number of modern tools that are now essential for medical diagnostics, such as magnetic resonance imaging scanners, were originally developed by physicists and chemists for the advancement of basic research.

Borrowing from the terminology used by Congress in its request, the committee’s study focuses on the issues surrounding a particular category of instruments and collections of instruments, referred to as advanced research instrumentation and facilities. In the charge to the committee, ARIF is defined as instrumentation with capital costs between $2 million and several tens of millions of dollars. In that range, there is no general instrumentation program at either the National Science Foundation (NSF) or the National Institutes of Health (NIH), yet they are the primary federal agencies that support instrumentation for research outside the national laboratories. The instruments and facilities in this price range fall under neither NSF’s Major Research Instrumentation program nor its Major Research Equipment and Facilities Construction account. The committee found that there are no general ARIF programs at the other federal funding agencies.

1. Pettitt, Montgomery. Response to Committee Survey on Advanced Research Instrumentation, 2005.

The committee identifies other characteristics of ARIF that should be part of its definition. Many qualities distinguish ARIF from more easily acquired instrumentation. The capital cost of ARIF is not its distinguishing factor, and thus many of the characteristics of and challenges associated with ARIF may apply to instruments and facilities costing less than $2 million. ARIF are


“Advanced research instrumentation provides a technological platform to answer the hardest, unanswered questions in science. An investment on the order of magnitude of 10 or 100 million dollars will pay off many times over if it opens up opportunities to discover new sources of energy, cures for diseases, etc. Beyond potential revenue generating applications, having access to advanced research instrumentation also opens up avenues for fundamental discoveries, the implications of which may be currently unfathomable.”

Melissa L. Knothe Tate

Case Western Reserve University

Response to Committee Survey on Advanced Research Instrumentation

ARIF includes both commercially available instruments and specially designed and developed instruments and both physical and nonphysical tools. Specially developed instruments are assembled from many less expensive components to make a new, more advanced and powerful instrument. ARIF may be single standalone instruments, networks, computational modeling applications, computer databases, systems of sensors, suites of instruments, and facilities that house ensembles of interrelated instruments. A number of different funding mechanisms support the wide diversity of ARIF.

ARIF can be loosely categorized into two types of use. Some are “workhorse” instruments, essential to everyday research and training. Others are “racehorse” instruments, newly developed or constantly developing, that are perched on the cutting edge of research. Racehorse instruments, because they are novel, are often easier to justify to potential funders. Both workhorse and racehorse instruments are vital for research, and finding the right balance between the two is a challenge.

The “facilities” portion of “ARIF” was incorporated by the committee to emphasize that some research fields and problems require collections of advanced research instrumentation. As has been described earlier, the changing face of research has demanded that a wide array of instruments be brought to bear on a single problem. Collections of instruments are often essential for meaningful research to occur. To solve some types of scientific problems or to engineer new materials, multiple instruments are necessary to carry out a series of steps or processes. Complementary instruments are more effective when housed side by side and may be far more productive than each one is individually.

Historically, centralized facilities have played a large role in research involving ARIF instrumentation by consolidating resources, increasing collaboration, and making available rare or unique resources to a large number of users. Most publicly funded centralized facilities are located at universities and national laboratories. The state of US research facilities is often cited as an indicator of the nation’s long-term international competitiveness in research. For example, a 2000 National Academies Committee on Science, Engineering, and Public Policy study of materials facilities noted that “rapid advances in design and capabilities of instrumentation can create obsolescence in 5–8 years.” The study further noted that the overall quality of characterization services provided by materials facilities supporting universities and industry had “lost substantial ground” to Japan and Europe. 2

The committee distinguishes between facilities and centers. A center is defined as a collection of investigators with a particular research focus. A facility is defined as a collection of equipment, instrumentation, technical support personnel, and physical resources that enables investigators to perform research.

In referring to ARIF, the committee excludes facilities that house large assemblies of unrelated or only loosely related equipment and that generally require no targeted support staff. Different research fields require different types of ARIF, and some fields have a larger demand for ARIF than others. ARIF are not distinguished by the diversity of research fields or geographic regions they support.

The research community recognizes the importance of instruments. The National Science Board (NSB) recently identified eight Nobel prizes in physics that were awarded for the development of new or enhanced instrumentation technologies, including electron and scanning tunneling microscopes, laser and neutron spectroscopy, particle detectors, and the integrated circuit. 3 Nobel prizes for the development of instrumentation have been awarded in chemistry and medicine for instrumentation related to nuclear magnetic resonance (NMR) or magnetic resonance imaging, and many were also awarded at least in part for the development of ARIF. Many of the ground-breaking instruments that qualified for a Nobel prize or contributed to Nobel prize-winning work began as ARIF and through development have become widely available and more affordable.

Table 2-1 lists the types of ARIF reported to the committee in its survey of institutions. The results of this survey are known to be incomplete and not representative of the state of ARIF on university campuses. Notably absent from this table, for example, are cybertools other than supercomputers, which cost much to develop but little to use. Computer modeling programs are used often by the chemistry and biology community. The cost of acquiring these computational modeling programs is often negligibly small, but the cost of creating them is often substantial. The computational chemistry program, NWChem, for example, cost around $10 million to create and is distributed free. Further details about the committee’s survey and the ARIF reported in it can be found in Appendix C. Table 2-1 is followed by descriptions of several types of ARIF.

TABLE 2-1 Examples of ARIF, by Field

| Field | Selected Instruments or Facilities | Capital Cost (millions of $) |
|---|---|---|
| Astronomy | Telescope, spectrograph, infrared camera for Magellan | |
| Biology | Proteomics-protein structure laboratory | |
| Cyberinfrastructure | | |
| Geosciences | Ion microprobe, earthquake sensor testing laboratory | |
| Materials | Electron-beam lithography system, semiconductor production system | |
| Human and animal imaging | Magnetic resonance imager, human and animal | |
| Spectrometry (NMR) | NMR spectrometer, 800-900 MHz | |
| Physics | Infrared camera, pulsed electron accelerator | |
| Space | MegaSIMS (isotope analysis) | |
| Facility-supporting equipment | Helium refrigerator supplying helium for superconducting magnets at the National Superconducting Cyclotron Laboratory | |

Source: Committee on Advanced Research Instrumentation survey of academic institutions, 2005.

2. National Academies. Experiments in International Benchmarking of U.S. Research Fields. Washington, DC: National Academy Press, 2000.

3. National Science Board. Science and Engineering Infrastructure for the 21st Century: The Role of the National Science Foundation. Arlington, Va.: National Science Foundation, 2003.

Imaging technologies provide many of the best-known examples of the evolution of modern instrumentation. Fifty years ago, studies of the effects of magnetic fields on the nuclear spin states of molecules were at the forefront of esoteric physics research. The earliest magnetic resonance spectrometers were inexpensive to build (they could literally be cobbled together by graduate students from spare radar parts); this was fortunate because measuring nuclear spin properties had no conceivable application. If sensitivity and instrument performance had stayed the same, NMR still would have no conceivable application.

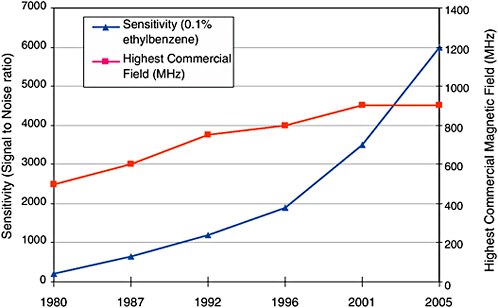

Instead, today magnetic resonance is a fundamental technique for biological imaging and the most important spectroscopic method for chemists, the only one that measures the structure of proteins in their natural environment (in solution). Since 1980, the sensitivity of the best commercially available NMR spectrometers has improved by a factor of 30. With that advance alone, NMR spectra could be acquired 900 times faster today than 25 years ago. Improvements in resolution and pulse sequences make the advances in NMR spectrometry even more dramatic. Figure 2-1 shows how NMR resolution and sensitivity have progressed since 1980.

FIGURE 2-1 Historical capability of NMR spectrometers.

Source: Razvan Teodorescu, “Bruker Biospin Magnets.” Presentation to the National Research Council Committee on High Magnetic Field Science, December 8, 2003.
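The step from a 30-fold sensitivity gain to a 900-fold speedup is consistent with the usual signal-averaging relation, in which signal-to-noise grows as the square root of the number of scans, so the time needed to reach a fixed signal-to-noise ratio falls as the square of the sensitivity gain. The short sketch below assumes only that relation; the function name is illustrative and does not come from the report.

```python
def acquisition_speedup(sensitivity_gain: float) -> float:
    """Return how many times faster a spectrum reaches a fixed signal-to-noise ratio.

    Assumption: signal averaging, where SNR grows as sqrt(number of scans),
    so acquisition time at fixed SNR scales as 1 / sensitivity_gain**2.
    """
    if sensitivity_gain <= 0:
        raise ValueError("sensitivity_gain must be positive")
    return sensitivity_gain ** 2


# 30x sensitivity gain since 1980, as cited in the text
speedup = acquisition_speedup(30)
print(f"{speedup:.0f}x faster")
```

Under this assumption a 30-fold gain gives 30 squared, or 900, which matches the figure in the text.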

Modern, very-high-field NMR spectrometers (high fields help to resolve the many atoms found in large molecules) are complex instruments; the most advanced machines today cost millions of dollars. The next generation, which pushes to still higher magnetic field strengths, will require a concerted effort in superconductor physics and radiofrequency design but will create even further dramatic extensions of the applicability of the technique. 4

The pioneers of magnetic resonance would never have dreamed that 50 years later the International Society of Magnetic Resonance in Medicine would have 2,800 papers and 4,500 attendees at its annual meetings. Today, magnetic resonance imaging is a mainstream diagnostic tool, and functional magnetic resonance imaging (literally, watching people think) promises to revolutionize neuroscience and neurology. Again, the applications and the expense are intimately coupled to the sophistication of the technology: a modern, commercially available 4 Tesla whole-body magnetic resonance imager can easily cost $10 million to acquire and site, and it requires highly specialized technical staffing to maintain its performance. For the institutions that responded to the committee’s survey, the annual cost of operation for magnetic resonance imagers averaged 10% of the capital cost.

4. National Research Council. Opportunities in High Magnetic Field Science. Washington, DC: National Academies Press, 2005.

NMR Spectrometer

NMR spectrometers probe materials and biological processes at the molecular and nano-scale to give information on the three-dimensional structure and dynamics of molecules. This instrument is routinely used in chemistry, materials science, biology, and clinical medicine. NMR spectrometry is used to study everything from DNA to disease-causing proteins. Information obtained from NMR studies aids in the development of new drugs.

Magnetic Resonance Imager

The magnetic resonance imager is used to take pictures of the body. A major current application of such an imager is in brain research. While the general areas of the brain where speech, sensation, memory, and other functions occur are known, the exact locations vary from individual to individual. This instrument is used by neurobiologists in the recently developed high-speed “functional imaging” mode to map out precisely which part of the brain is handling critical functions such as thought, speech, movement, and sensation. These experiments, though not straightforward, have started a revolution in our knowledge of brain function.

Modern methods in optical and x-ray imaging also reflect the evolution from physics to more applied science. They are not simply descendants of van Leeuwenhoek’s crude microscope and Röntgen’s x-ray hand picture; they embody and enhance our understanding of molecular and cellular structure and function. It is only a slight exaggeration to say that the most important application of the crude lasers of the 1960s was as inspiration for science-fiction television and movie weapons. Today, advanced laser systems permit microscopy hundreds of times deeper into tissue than would be possible with an ordinary microscope, and they are central to the rapidly growing field of molecular imaging.

The National Cancer Institute has identified molecular imaging as an “extraordinary opportunity” with high scientific priority for cancer research. The most promising approach is the development of new technologies and methods to improve the imaging and molecular-level characterization of biologic systems.

In the committee’s surveys of researchers and institutions, NMR spectrometers were among the most commonly cited individual instruments, and advanced models were among the most commonly sought. The availability of ARIF in general was of concern to many researchers for whom access to increasingly advanced instruments was the key to advancing science and providing solutions to societal problems. The NMR spectrometer, as it becomes more and more sophisticated, exemplifies the issue. As one researcher noted,

there is an increasing need for advanced research instrumentation in many fields. Many instruments that start out appearing to be expensive and esoteric rapidly become mainstream. The good side of this is that these instruments fuel impressive scientific results. The bad side is that scientists who do not have access to these instruments tend to fall behind in terms of their results and in what experiments they can propose in grant applications.

… Five or six years ago, few labs had access to very high field spectrometers (750 MHz or above), but now the field has been pushed ahead to where many … projects require such instrumentation. A significant number of researchers have access to these machines, but many either don’t have access or must drive/fly long distances to obtain access. While on paper it sounds fine to ask a researcher to travel to a high field spectrometer, in practice this is very cumbersome and does not lead to cutting edge results. For any particular NMR project, a dozen or more different NMR experiments must be carried out on a sample…. Traveling back and forth to a “richer” or better endowed university is not conducive to getting results. 5

One of the major accomplishments of science in the 20th century was the deciphering of the human genome. That achievement made it possible to understand the molecular basis of human life in unprecedented detail. The potential for improving health and curing disease has already been demonstrated, but most of the benefits remain to be seen. The achievement will provide the basis of discoveries far into the future.

5. LiWang, Patricia. In response to National Academies Advanced Research Instrumentation Survey, 2005.

X-ray Crystallography

X-ray crystallography is an experimental technique that uses x-ray scattering off of molecules or atoms in a crystal to make a model of the molecule or crystal. This technology is pivotal for obtaining knowledge of protein structures, which is a prerequisite for rational drug design and for structure-based studies that aid the development of effective drugs.

The genetic information in DNA is stored as a sequence of bases, and DNA sequencing is the determination of the exact order of the base pairs in a segment of DNA. Two groups, in the United States and the United Kingdom, first accomplished sequencing in 1977 and were awarded the 1980 Nobel prize in chemistry. However, their approaches were time-consuming and labor-intensive. Further advances came in 1986-1987 with the development of fluorescence-based detection of the bases. That led quickly to automated high-throughput DNA sequencers that were soon commercialized and made generally available to the research community. However, the speed of those devices was still not sufficient to decode the human genome in any reasonable amount of time.

Proteomics

Proteomics is the identification, characterization, and quantification of proteins, and its applications include drug discovery and targeting, whole proteome analysis of any organism, agriculture, and the study of protein complexes, gene expression, and disease. The mass spectrometer is used to determine the structure and chemical nature of molecules, including proteins, and can be used to find the concentration of known molecules and identify unknown ones. Mass spectrometry can identify compounds even if they are present in very low concentrations. It is powerful in a wide range of applications, including the detection of environmental contaminants, establishing the purity of food and industrial products, locating oil deposits, and studying materials brought back from space missions. Two-dimensional electrophoresis is used to isolate proteins for further study with mass spectrometry.

Beginning in 1990, the pressures of approaching the daunting task of sequencing the human genome produced a number of new advances, which resulted in a fully automated high-throughput parallel-processing device that was 10 times faster than the older method. The progress and success of the Human Genome Project constitute a case study in instrumentation and of how, without development, it can become a pacing factor for research. The leaders of the sequencing efforts at the Department of Energy Office of Science and NIH recognized that the existing technology was not capable of sequencing fast and cost-effectively. As a result, they invested substantially not only in researchers but in the further development of sequencing technologies. The tandem approach proved very successful.

Beamlines

A synchrotron is a large machine (about the size of a football field) that accelerates electrons to almost the speed of light. The electrons are deflected through magnetic fields, thereby creating extremely bright light. This light is channeled down beamlines to experimental workstations where it is used for research. Beamlines are used to examine samples of microscopic matter, to analyze ultradilute solutions, and to observe what happens during chemical or biological reactions over very short timescales. Knowledge gained from synchrotron-based studies could someday lead to pollution-free electric trains levitated by superconductivity, atom-sized factories, and molecular-sized machines.

Genome sequencing requires the assembly of millions of fragments into a complete sequence. By itself, the mechanical process of sequencing was not sufficient to map the human genome in a reasonable time. The project was aided by computer algorithms developed by researchers in the late eighties and early nineties. The confluence of hardware and software development made it possible to complete the human genome sequence years before it had been considered possible. It also provided a general approach to large-scale sequencing that has resulted in the understanding of the genomes of a wide variety of organisms. The knowledge of genomes of a number of organisms has vastly accelerated discovery in basic biology research.
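The report does not name the assembly algorithms involved, so the following is only a toy illustration of the general idea of joining overlapping fragments into a longer sequence. It uses a simple greedy overlap merge on made-up reads. The function names and the `min_len` threshold are assumptions for this sketch, and production assemblers must also handle sequencing errors, repeats, and reverse-complement strands, none of which is attempted here.

```python
def overlap(a: str, b: str, min_len: int = 3) -> int:
    """Length of the longest suffix of `a` that equals a prefix of `b`.

    Returns 0 when no overlap of at least `min_len` bases exists.
    """
    for n in range(min(len(a), len(b)), min_len - 1, -1):
        if a.endswith(b[:n]):
            return n
    return 0


def greedy_assemble(fragments: list[str], min_len: int = 3) -> list[str]:
    """Repeatedly merge the pair of fragments with the largest overlap.

    Toy greedy strategy (an assumption of this sketch, not the project's method).
    Stops when a single contig remains or no overlaps are left.
    """
    frags = list(dict.fromkeys(fragments))  # drop exact duplicates, keep order
    while len(frags) > 1:
        best_len, best_i, best_j = 0, -1, -1
        for i, a in enumerate(frags):
            for j, b in enumerate(frags):
                if i != j:
                    n = overlap(a, b, min_len)
                    if n > best_len:
                        best_len, best_i, best_j = n, i, j
        if best_len == 0:
            break  # no remaining overlaps; leave the contigs separate
        merged = frags[best_i] + frags[best_j][best_len:]
        frags = [f for k, f in enumerate(frags) if k not in (best_i, best_j)]
        frags.append(merged)
    return frags


# Hypothetical reads, invented for illustration only
reads = ["ATGCGTAC", "GTACGTTA", "GTTAGC"]
print(greedy_assemble(reads))
```

The pairwise comparison grows quadratically with the number of fragments, which gives a rough sense of why assembling millions of fragments depended on algorithmic advances as well as faster sequencers.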

The history of the genome project shows how technology development can influence the course of discovery. Hardware and software were both needed and were synergistic. The parallel development of sequencers and software demonstrates that not only key insights but also incremental improvements can make a qualitative difference in the progress of science.

Cyberinfrastructure

The term “cyberinfrastructure” refers not only to advanced scientific computing but also to a comprehensive infrastructure for research and education based upon networks of computers, databases, on-line instruments, and human interfaces. Cyberinfrastructure is increasingly required to understand global climate change, protect our natural environment, apply genomics-proteomics to human health, maintain national security, master the world of nanotechnology, and predict and protect against natural and human disasters. All fields of science from physics to social sciences rely on databases (e.g., ICPSR and SPARC) for research.

Today, computers are vital tools to scientists and engineers. Indispensable for communication and often used in conjunction with many instruments, the computer can also be a scientific instrument itself. This section gives examples of three types of cybertools that are fundamental to several fields of research: software, data collections, and surveys.

Although one of the first scientific applications of digital computers in the 1940s was to try to predict the weather (with grants from the US Weather Service, the Navy, and the Air Force to John von Neumann at the Institute for Advanced Study in Princeton), scientific applications software aimed at obtaining a better understanding of physical phenomena did not become widely available until the 1960s and 1970s. Scientists and engineers have since developed a broad array of scientific software applications that are acknowledged to be indispensable in the scientist’s toolkit: the software equivalents of the NMR spectrometer and other instruments described above. Examples of such applications software in use today are Gaussian, a molecular modeling code that is used by experimental and theoretical chemists to understand molecular structure and processes better and more easily by performing computer “experiments” rather than chemistry experiments; the Community Climate System Model, which is used by the climate research community to understand the evolution of past and future climates; and CHARMM and AMBER, used by the biomolecular community to understand the structure and dynamics of proteins and enzymes.

Gaussian and the Nobel Prize

In 1998, John A. Pople was awarded the Nobel prize in chemistry (his co-laureate was Walter Kohn of the University of California, Santa Barbara). The citation for Professor Pople referred to “his development of computational methods in quantum chemistry.” The research “tool” that Professor Pople developed was the Gaussian program, which is now one of the most widely used tools in chemical research. The first version of Gaussian was released in 1970 and provided only limited ways of modeling molecular structure and processes. The last release of Gaussian, in 2003, is so advanced it can predict the structure and properties of many molecules to an accuracy that enables chemists to gain a better understanding of the problems they are investigating.

Scientific applications, such as Gaussian, often began as small research projects in the laboratory of a single investigator. However, as the capabilities of computers increased, the applications included models of the physical and chemical processes of higher and higher fidelity, and the software became more and more complex. Today, many of the scientific applications involve hundreds of thousands to millions of lines of code, took hundreds of person-years to design and build, and require substantial continuing support to maintain them, port them to new computers, and extend their capabilities as new knowledge accumulates. The cost of developing major new scientific and engineering applications can be more than $10 million, and the cost of continuing maintenance, support, and evolution exceeds $2 million per year. Thus, these software applications are well within the category of advanced research instrumentation being considered in this report.


Political Science Instrumentation

Instrumentation requirements in political science largely rest in the operation of major longitudinal data series and the maintenance of the institutional support for them, with the peak example being the National Election Studies sustained by the National Science Foundation. A second emerging area is the development of laboratory capacity for computerized political science research. A third area involves archiving, particularly of political communication materials, and the construction of meta-data. Virtual data center projects are also emerging, where use carries essentially no marginal cost but development is very expensive. Future instrumentation needs could include: a network of exit polling to validate election outcomes; linking of political-administrative information systems with physical science systems to translate natural disaster warnings into effective systems for sharing life-saving information and implementing public safety plans; and developing collaborations between political scientists and other scientists in brain imaging and genomics.

Digital data collections also provide a fundamentally new approach to research. By gathering data generated in studies on related topics, digital data collections themselves become a new source of knowledge. One of the best examples of a large scientific database that is integral to progress in science and engineering is GenBank, the genetic sequence database maintained for the biomedical research community by NIH. GenBank was born at Los Alamos National Laboratory in 1982, well before the beginning of the Human Genome Project. When the Human Genome Project came into being and the number of available sequences exploded, GenBank became an indispensable repository for the data being generated. Today GenBank contains over 49 billion nucleotide bases in over 45 million sequence records, and the amount of data is increasing exponentially with a doubling time of less than two years. All sequencing data produced by the Human Genome Project must be deposited in GenBank before it can be published in the literature. Because of the unique role now being played by digital data collections in research and education, the NSB recently drafted a report on the subject, finding that such collections are used in most fields of science, from astronomy (as in the Sloan Digital Sky Survey) to biology (as in The Arabidopsis Information Resource) [6]. It concluded that “digital data collections serve as an instrument for performing analysis with an accuracy that was not possible previously or, by combining information in new ways, from a perspective that was previously inaccessible.” The collections are often fundamental tools of the social sciences, housing extensive survey and census results and archived media.
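The growth figures quoted for GenBank can be made concrete with a short calculation. The sketch below is illustrative only: it takes the 49 billion bases stated above as a starting point and assumes a doubling time of exactly two years, which the text gives as an upper bound, so the projections are lower bounds on growth rather than forecasts. The function names are hypothetical.

```python
def projected_size(current: float, years: float, doubling_time_years: float) -> float:
    """Size after `years` of exponential growth with the given doubling time."""
    return current * 2 ** (years / doubling_time_years)


def annual_growth_rate(doubling_time_years: float) -> float:
    """Fractional growth per year implied by a doubling time."""
    return 2 ** (1 / doubling_time_years) - 1


# Figure from the text: over 49 billion nucleotide bases.
bases_now = 49e9
# Assumption: doubling time of exactly 2 years (the text says "less than two").
doubling_time = 2.0

print(f"Implied annual growth: {annual_growth_rate(doubling_time):.1%}")
for years in (2, 4, 10):
    size = projected_size(bases_now, years, doubling_time)
    print(f"After {years:2d} years: at least {size:.3g} bases")
```

Under that assumption the collection grows by roughly 41 percent a year and by a factor of 32 in a decade, which is why storage and curation costs for such collections scale like those of an instrument rather than a one-time purchase.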

Especially in the social sciences, the survey itself is a scientific instrument that can cost millions of dollars a year to maintain. Longitudinal surveys, which are large and often decades long, are analogs to the telescopes and microscopes of the other sciences. These surveys are not created by single investigators; they are often sources of basic data used by arrays of disciplines. Increasingly, surveys collect not only social data but also biomedical data (e.g., cheek swabs for DNA analysis) or are integrated with satellite down feeds or inputs from air quality sensors. The data are very expensive to collect in any given round, let alone over time. They are also expensive to document and to make accessible as public use files while preserving anonymity and confidentiality. Frequently, the data for such surveys can be collected by only one or two survey research centers in the country, such as the Institute for Social Research at the University of Michigan, that possess the ongoing human and local infrastructure to manage them at affordable scale.

Computational technology has advanced to the point where computers can be used as tools not only for remotely accessing databases and collaborating with other researchers but also for remotely accessing and controlling scientific instruments. A 900-MHz virtual NMR facility at the Pacific Northwest National Laboratory, for example, supports a national community of users, roughly half of whom use the instrument remotely. The technology can provide better and less expensive access to instruments for geographically remote users and can permit more effective use of instruments. The openness of this technology and the ability to book instrument time at all hours may be a means to generate more revenue from user fees and thus recoup the facility’s operation and maintenance expenditures.

6. National Science Board. Long-Lived Digital Data Collections: Enabling Research and Education in the 21st Century. NSB-05-40. March 30, 2005, Draft.

Earth and Ocean Science Sensor Systems

Earth and ocean sciences rely on sensor systems, both to predict natural disasters and to learn about climate, natural phenomena, and weather. Ocean sensors are essential for predicting when and where a tsunami will strike. New seismometer technology is making earthquake predictions and immediate, accurate damage assessment possible. Orbiting satellites are a part of tsunami and earthquake sensor systems and are essential for predicting dangerous weather, including hurricanes and tornadoes.

Not all advanced research instrumentation is housed in laboratories. Progress in the physics underlying the technological development of modern scientific instruments and their associated cybertools has given rise to an unprecedented explosion in the scope of basic research in the geosciences and biosciences that relies on field observations. Atmospheric scientists, oceanographers, geophysicists, and ecologists are now tackling and solving fundamental problems that require analysis of large numbers of observations that are both time- and space-dependent. Some of the sensors and tools required to make the necessary measurements can be deployed on familiar mobile instrumental platforms, such as oceanographic ships, research aircraft, and earth-orbiting satellites; but many need to be distributed in sensor networks of local, regional, or even global scale. Both physical and wireless networks can be used to transmit data to off-site storage facilities.

A good example of a distributed sensor network is the Global Seismographic Network (GSN), which consists of 130 seismic stations distributed on continental landmasses, oceanic islands, and the ocean bottom. GSN recording and nearly real-time distribution of seismic-wave parameter measurements at numerous sites over the globe serve the needs of basic research in geophysics (such as seismic tomography of the earth’s interior structure) and of applied geosciences (such as earthquake and tsunami monitoring and seismic monitoring of nuclear testing).

Seismometers were originally developed to study earthquakes, but their modern versions, deployed in geographically distributed networks, record data that can be processed with sophisticated computing methods to produce images of the solid earth. The resulting “seismic imaging” is science’s most important source of knowledge about the structure of the earth’s interior and its consequences for humanity with respect to, for example, mineral and energy resources and earthquake and volcanic hazards.

Today’s seismograph system takes advantage of modern off-the-shelf hardware for many of its components. Global positioning system (GPS) receivers provide the accurate timing required, off-the-shelf electronic amplifiers generate little noise or distortion, and commercial analog-to-digital converters with true 24-bit or higher resolution are an improvement over custom-designed “gain-ranging” systems; but the primary sensor of a modern seismometer still requires unique design to meet a combination of stringent requirements. To detect the smallest signals above the earth’s background “hum,” the self-excitation of the pendulum sensor by Brownian noise must be less than that caused by shaking the instrument’s foundation with an acceleration of 1 nm/s² across a wide frequency band of 10⁻⁴ to 100 Hz. Furthermore, to make faithful records of the largest earthquakes, the response across the same frequency band must be linear up to excitation amplitudes that are 10¹² times greater than the smallest detectable signals. No company in the United States produces sensors with those capabilities, and no US universities train engineers in seismometer design.
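To give a sense of scale for the sensor requirements just described, the amplitude range and frequency band can be converted into more familiar engineering units. The sketch below is a back-of-the-envelope illustration, not a design calculation from this report: it assumes the usual 20·log10 convention for amplitude ratios in decibels and treats an ideal N-bit converter as spanning a ratio of 2^N, which ignores converter noise and other real-world effects. The function names are hypothetical.

```python
import math


def amplitude_ratio_to_db(ratio: float) -> float:
    """Express an amplitude ratio in decibels (20 * log10 convention)."""
    return 20.0 * math.log10(ratio)


def ideal_converter_range_db(bits: int) -> float:
    """Idealized span of an N-bit converter: largest code over one step.

    Assumption: a simple 2**bits ratio, ignoring noise and nonlinearity.
    """
    return amplitude_ratio_to_db(2 ** bits)


def bits_to_span(ratio: float) -> int:
    """Smallest whole number of bits whose 2**bits range covers the ratio."""
    return math.ceil(math.log2(ratio))


# Figures from the text.
linear_range = 1e12            # largest over smallest detectable amplitude
f_low, f_high = 1e-4, 100.0    # frequency band in Hz

print(f"Sensor linear range: {amplitude_ratio_to_db(linear_range):.0f} dB")
print(f"Ideal 24-bit converter: {ideal_converter_range_db(24):.1f} dB")
print(f"Bits needed to span the full range: {bits_to_span(linear_range)}")
print(f"Frequency band: {math.log10(f_high / f_low):.0f} decades")
```

Under these assumptions the sensor requirement corresponds to about 240 dB over six decades of frequency, whereas an ideal 24-bit converter spans roughly 144 dB, which illustrates why the primary sensor remains the hardest component to source off the shelf.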

Environmental sensor systems must often be installed in remote field locations, and this poses difficulties not encountered in housing instruments in a laboratory setting. For example, seismometers must be installed in ways that isolate them from drafts, temperature changes, and ambient noise and that protect them from damage by animals and vandalism. Low-power, rugged, and high-capacity data-storage systems are required for remote locations where energy must be provided by fuel cells or batteries recharged by solar or wind-based systems. In locations where Internet service is not available, transmission of data must take advantage of satellites or other telemetry technologies that can substantially add to costs. Although data transmission and interrogation of routine instrument functions can be dealt with remotely, periodic maintenance by technicians is needed
